
We live in tumultuous but interesting times. The rich have gotten richer, the poor have gotten poorer, and innovators have devised new ways to work through the disruption brought about by the coronavirus pandemic. The pandemic has also changed our lifestyles: many of us have learned to cook complex dishes from scratch, while others have found new hobbies or spent time learning something new about themselves. Many of us have also finally found the time to curl up on our couches and binge-watch Netflix originals until we run out of bandwidth.

Sudden surges

Most video and audio streaming services, such as Netflix and Amazon Prime Video, have highly scalable systems that can withstand sudden surges and spikes in usage. Even so, these services can still experience outages, which frustrate users and, in extreme cases of long-term outages, lead them to abandon the platform. Complex, large-scale distributed systems with potentially millions of users must therefore be tested effectively and extensively with surges and spikes in mind.

However, unusually heavy spikes such as those caused by the pandemic were unprecedented and were possibly not part of any company’s test plans.

Continuous integration, delivery and production

The problems of CI/CD and resolving the problems of constantly engaged systems

Companies like Netflix have constant updates to their system, which are continuously tested and delivered to their live platforms. For this, Netflix testing teams create hundreds of thousands of tester accounts every day, each being used in thousands of test scenarios to avoid any shortfalls.

This has caused the testing of Netflix to move from a manual testing regimen that would work on a test system before making it live to a large, distributed automated testing of Netflix client and server applications running at scale in production. To facilitate this, testing at Netflix has gone from a low-volume manual mode to a continuous, fully automated, voluminous mode where nothing is left to chance.

An imaginary scenario with real implications

Imagine this: you and millions of others are at the nail-biting, suspenseful climax of a story and suddenly, boom! Netflix is offline. This would set alarm bells ringing at Netflix HQ, and testing SWAT teams would fly in through the windows to analyse what went wrong. Thankfully, this does not happen often.

The Goal

The goal at Netflix is simple—to be online for their users 99.99% of the time. Although Netflix has a pretty decent track record of staying online, they do occasionally encounter glitches that put the system off track. One of these incidents occurred when a development team at Netflix deployed software that impacted the large infrastructure at Netflix negatively, causing widespread disruption in services and thousands of unhappy customers.
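To make the 99.99% target concrete, the short calculation below converts an availability figure into a downtime budget. It is only an illustration of the arithmetic; the function name and the choice of a 365-day year and a 30-day month are assumptions, not figures from Netflix.

```python
def downtime_budget_minutes(availability: float, period_days: float) -> float:
    """Minutes of downtime allowed in a period at the given availability."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1.0 - availability)


# 99.99% availability over a 365-day year and over a 30-day month
yearly = downtime_budget_minutes(0.9999, 365)
monthly = downtime_budget_minutes(0.9999, 30)
print(f"{yearly:.2f} minutes per year, {monthly:.2f} minutes per 30 days")
```

At this level, a single outage of a few hours uses up several years of budget, which is why an incident like the one described here prompts a rethink of the testing approach.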

This led to Netflix scrambling to create a fix that resolved the issue in a few hours. It also gave Netflix some food for thought: their testing regimen was inadequate and ineffective for such a large, distributed, user-facing system.

What could go wrong?

What happened at Netflix was an oversight on various levels. A new piece of code that was designed to clean up unused resources was actually being tested on the production server. This oversight caused two major problems due to bugs in the code:

- The first bug caused the dry-run flag in the cleanup code, which was meant to prevent any actual cleanup, to be interpreted incorrectly, reversing its effect. This slipped through because of a poorly written unit test; a sound test could have caught the issue in development.

- The second bug was in a piece of code that checked if a resource was actually unused. The conclusion of this check overlooked some cases that existed only in production.

The combination of these two bugs caused a removal of key resources in production—resulting in the actual outage at Netflix.
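The kind of unit test that would catch an inverted dry-run flag can be sketched as follows. This is a hypothetical reconstruction for illustration only: `ResourceStore`, `cleanup` and the test names are invented here and are not Netflix code.

```python
from dataclasses import dataclass, field


@dataclass
class ResourceStore:
    """Hypothetical store mapping a resource name to an 'in use' flag."""
    resources: dict = field(default_factory=dict)

    def delete(self, name: str) -> None:
        del self.resources[name]


def cleanup(store: ResourceStore, dry_run: bool = True) -> list:
    """Return the unused resources; delete them only when dry_run is False."""
    candidates = [name for name, in_use in store.resources.items() if not in_use]
    if not dry_run:  # an inverted check here would delete during dry runs
        for name in candidates:
            store.delete(name)
    return candidates


def test_dry_run_deletes_nothing():
    store = ResourceStore({"a": True, "b": False})
    assert cleanup(store, dry_run=True) == ["b"]
    assert store.resources == {"a": True, "b": False}


def test_real_run_deletes_only_unused():
    store = ResourceStore({"a": True, "b": False})
    assert cleanup(store, dry_run=False) == ["b"]
    assert store.resources == {"a": True}
```

The first test asserts on the state of the store after a dry run, not just on the returned list, so a reversed flag fails immediately. The second bug, an "unused" check that misses production-only cases, is harder to catch this way, because the test data would need to include those cases.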

Preventing these problems

Preventing or reducing the incidence of these problems leads to a common dilemma:

Should testing be done in a test environment or in a production environment? Although most of us would advocate testing to be done in pre-production so that actual customers are not impacted, some would advocate testing in production to ensure that code is running well in both test and prod. The reality of the scenario is that the code should be tested in all three situations: dev, test and prod. The challenge faced by Netflix was to devise an effective methodology that helps in deciding why, when and how to test in these environments.

This also led to another set of questions:

- Is the test environment a safe and complete mirror of our production environment?

OR

- Is the test environment the latest build with features that others might need to integrate with?

The result of this was the common scenario of having overly complex and numerous test environments.

The answer

The answer that emerged from thinking about a fix for the existing problem was simple: end-to-end automation that would replicate thousands of scenarios without problems.

This answer, however, came with its own set of problems. The first was finding a scalable way to create a production-like pre-production environment without cloning production entirely, which would require a massive investment.

Another problem was that pre-production and production usage patterns could be completely different from each other. Pre-production traffic is also thousands of times lower than production traffic.

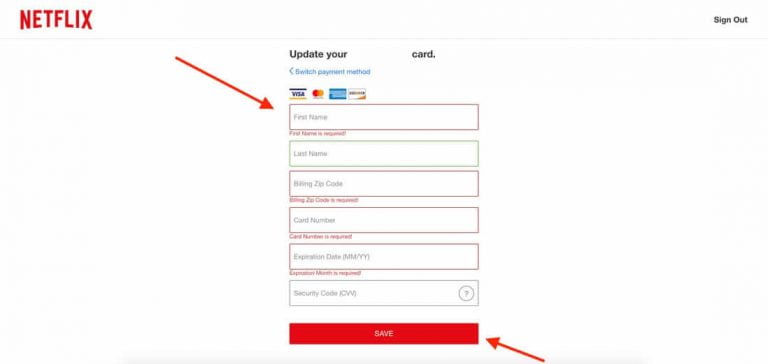

Testing payments

Testing payments was another colossus altogether. Instead of testing payments in production using real money, it is better to create fake methods of payment (MOPs) and exercise fake transactions on them in sandbox accounts that do not overburden the existing payment systems.
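One way to picture fake methods of payment is a sandbox gateway that never touches a real payment processor. The sketch below is an assumption-laden illustration: `SandboxGateway`, `start_subscription` and the fake MOP identifiers are hypothetical names, not part of any real payment API.

```python
from dataclasses import dataclass, field


@dataclass
class SandboxGateway:
    """Hypothetical in-memory gateway; no real money or processor involved."""
    declined_mops: set = field(default_factory=set)
    ledger: list = field(default_factory=list)

    def charge(self, mop_id: str, amount_cents: int) -> bool:
        approved = mop_id not in self.declined_mops
        # Record every attempt so tests can inspect what was exercised.
        self.ledger.append((mop_id, amount_cents, approved))
        return approved


def start_subscription(gateway, mop_id: str, price_cents: int) -> str:
    """Code under test: it only sees the gateway's charge() method."""
    return "active" if gateway.charge(mop_id, price_cents) else "payment_failed"


gateway = SandboxGateway(declined_mops={"fake-expired-card"})
print(start_subscription(gateway, "fake-valid-card", 999))
print(start_subscription(gateway, "fake-expired-card", 999))
```

Because the code under test depends only on `charge()`, the same scenarios can run thousands of times without adding load to the real payment systems.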

The approach

Of the thousands of possible approaches, Netflix chose production capture and replay to scale their test to be as close as possible to prod.

A large number of requests from customer devices were taken from persistence, stripped of their personally identifiable information and duplex-replayed in test. This turned the tests into real-world scenarios and helped identify numerous corner-case bugs that were previously unknown.
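The capture-and-replay idea can be sketched in a few lines: scrub each captured request, replay it against a handler in the test environment, and collect the failures. The request shape, the field names in `PII_FIELDS` and the `sample_handler` function are all assumptions made for this example.

```python
import copy

# Assumed names of fields that carry personal data in a captured request.
PII_FIELDS = ("email", "name", "ip_address")


def scrub(request: dict) -> dict:
    """Return a copy of the request with assumed PII fields redacted."""
    clean = copy.deepcopy(request)
    body = clean.get("body", {})
    for key in PII_FIELDS:
        if key in body:
            body[key] = "REDACTED"
    return clean


def replay(captured: list, handler) -> list:
    """Replay scrubbed requests against a test handler and collect failures."""
    failures = []
    for request in captured:
        clean = scrub(request)
        try:
            handler(clean)
        except Exception as exc:
            failures.append((clean.get("path"), repr(exc)))
    return failures


def sample_handler(request: dict) -> None:
    """Stand-in for the service under test."""
    if "title_id" not in request["body"]:
        raise ValueError("missing title_id")


captured = [
    {"path": "/play", "body": {"email": "user@example.com", "title_id": 42}},
    {"path": "/play", "body": {"email": "other@example.com"}},
]
failures = replay(captured, sample_handler)
print(failures)
```

Scrubbing works on a deep copy, so the captured originals are never modified, and the failure list contains only redacted data.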

The bugs identified were routed back into functional and integrated tests via a schema. This helped in gaining confidence in the quality of feature migrations and helped to accelerate change velocity. It also led to an interesting learning:

All the basic duplex tests could be run in production through tester accounts. However, prod capture and replay duplex tests were limited to the test environment, because reissuing requests in production would harm actual customer data.

Masked and refreshed data could safely be used to replay requests in the test environment after a time delay. This focused our interest on the data set rather than the production environment. Although this was not quite as stable as production, it gave us a good idea of what production behaviour could look like.

Failing is important in testing. Failures help test teams to identify real issues in downstream implementations. To surface such failures safely, all functional validations were run as real canaries in production, exposing a small percentage of actual customer traffic to both versions of the API under test.
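A common way to expose only a small, stable slice of traffic to a canary is to hash a customer identifier into a bucket. The sketch below shows that general technique; it is not a description of how Netflix routes canary traffic, and `routes_to_canary` and the identifier format are hypothetical.

```python
import hashlib


def routes_to_canary(customer_id: str, canary_percent: float) -> bool:
    """Deterministically send roughly canary_percent% of customers to the canary."""
    digest = hashlib.sha256(customer_id.encode("utf-8")).digest()
    # Map the hash to one of 10,000 buckets, i.e. steps of 0.01%.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < canary_percent * 100


sample = [f"customer-{i}" for i in range(20_000)]
share = sum(routes_to_canary(cid, 5.0) for cid in sample) / len(sample)
print(f"share routed to canary: {share:.3f}")
```

Hashing keeps each customer on the same version for the duration of the experiment, which makes failing requests easier to trace back to one version.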

Canary analysis algorithms were run on the metrics gathered from these implementations, and a compare-verify regimen checked whether client and server metrics were equivalent. Failing request logs captured from the canaries then helped to debug and triage issues better.
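A very simple form of canary comparison is a threshold check on each metric. The sketch below assumes every metric is "lower is better" and uses a hypothetical 10% tolerance; real canary analysis is typically statistical and considerably more involved.

```python
def canary_passes(baseline: dict, canary: dict,
                  max_relative_increase: float = 0.10) -> bool:
    """Pass only if no canary metric exceeds its baseline by more than the tolerance.

    Assumes every metric is 'lower is better' (error rate, latency).
    """
    for metric, base_value in baseline.items():
        canary_value = canary.get(metric)
        if canary_value is None:
            return False  # a missing metric is treated as a failure
        if canary_value > base_value * (1 + max_relative_increase):
            return False
    return True


baseline = {"error_rate": 0.010, "p99_latency_ms": 200}
healthy = {"error_rate": 0.0105, "p99_latency_ms": 210}
regressed = {"error_rate": 0.020, "p99_latency_ms": 205}
print(canary_passes(baseline, healthy), canary_passes(baseline, regressed))
```

When a check like this fails, the request logs captured from the canary are the natural starting point for triage.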

Learnings

Learnings from such an approach are manifold.

- The first one would be to understand that test and prod are different, but their differences must be embraced to utilize the capability of both.

- Although testing is good in a sandboxed environment, testing in production is important for such implementations.

- Solving the problems in either environment can go a long way in ensuring test success.

- Stay open to rethinking your testing strategy. Even if it comes at an extra cost, the end result will be worth it.

- Find a pragmatic testing shape that is right for your company—do not look for a textbook shape that fits in.

- Start production simulation and chaos experiments—these will help to validate your functional and resiliency testing capabilities for the future.

At Netflix, chaos testing is done at scale in production. Testing everything from fire raining from the sky to aliens killing their servers, they leave nothing to chance. If they don’t, why should you? The testing teams at Volumetree are experienced and reliable, and know when to raise the red flags. Give your software the quality edge it needs. Schedule a consultation with our test consultants today!
